The project this week was to convert my lab environment from NSX-V to NSX-T to support some upcoming projects.

Unconfiguring the hosts through the NSX-V interface didn’t work as expected: it left some of the NSX-V components behind.

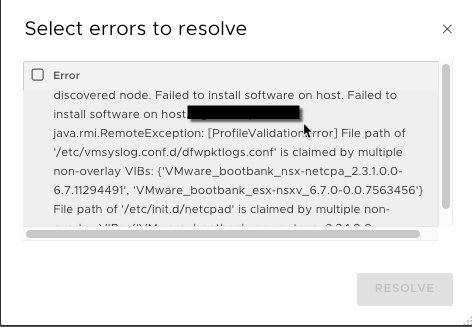

When trying to install NSX-T (2.3.1) on my vSphere 6.7 hosts, I received the error ‘File path of /etc/vmsyslog.conf.d/dfwpktlogs.conf is claimed by multiple none-overlay VIBS’.

After logging into one of the hosts and running ‘esxcli software vib list | grep -i nsx’ I discovered a stray NSX package called esx-nsxv.

    #esxcli software vib list | grep -i nsx
    esx-nsxv 6.7.0-0.0.7563456 VMware VMwareCertified 2019-03-08
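If you have several hosts to check, the same lookup can be wrapped in a small helper that prints only the VIB names. This is a minimal sketch: `list_nsx_vibs` is a hypothetical name of my own, and it assumes `awk` is available in the host's shell and that the VIB name is the first column of the `esxcli software vib list` output.

```shell
# Hypothetical helper: reads `esxcli software vib list` output on stdin
# and prints the name (first column) of every VIB whose name contains "nsx",
# matched case-insensitively.
list_nsx_vibs() {
  awk 'tolower($1) ~ /nsx/ { print $1 }'
}

# Example usage on a host (not executed here):
#   esxcli software vib list | list_nsx_vibs
```

Note that this matches any VIB with "nsx" in its name, so on a host that already has NSX-T installed it would list those packages too.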

This was quickly rectified by placing the host in maintenance mode, then running the following command.

#esxcli software vib remove -n esx-nsxv

The command returned the following output.

    Removal Result
       Message: Operation finished successfully.
       Reboot Required: false
       VIBs Installed:
       VIBs Removed: VMware_bootbank_esx-nsxv_6.7.0-0.0.7563456
       VIBs Skipped:

After exiting maintenance mode I retried the installation by selecting ‘Resolve’ in NSX-T.

NSX-T installation on the host then completed successfully.
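For repeating the host-side steps on other hosts, the sequence above (enter maintenance mode, remove the VIB, exit maintenance mode) can be sketched as a small shell function. This is an illustration rather than exactly what I ran: `run`, `remove_stray_vib`, and the `DRY_RUN` variable are hypothetical names, and it assumes maintenance mode is toggled from the host shell with `esxcli system maintenanceMode set` and that any running VMs have already been migrated or powered off. It defaults to a dry run that only prints the commands.

```shell
# Hypothetical wrapper: print the command when DRY_RUN is 1 (the default),
# otherwise execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Hypothetical helper: remove one stray VIB with the host in maintenance mode.
# The && chain stops at the first failing step, so the host stays in
# maintenance mode if the removal fails.
remove_stray_vib() {
  vib="$1"
  run esxcli system maintenanceMode set --enable true &&
  run esxcli software vib remove -n "$vib" &&
  run esxcli system maintenanceMode set --enable false
}

# Example usage (not executed here):
#   remove_stray_vib esx-nsxv             # dry run, prints the three commands
#   DRY_RUN=0 remove_stray_vib esx-nsxv   # actually runs them on the host
```

In my case the removal reported that no reboot was required, so the sketch does not reboot the host; check the `Reboot Required` line in the output before exiting maintenance mode.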